The relative importance of properties related to protein folding, such as hydropathy and size, and to function, including side-chain acidity, can also be estimated. This approach allows the quantification of the average information value of nucleotide positions, which can shed light on the coevolution of the canonical genetic code with the tRNA-protein translation mechanism.

Keywords: Amino acids; Genetic code; Information theory; RNA translation; Shannon entropy.

Copyright © 2016 Elsevier Ltd.

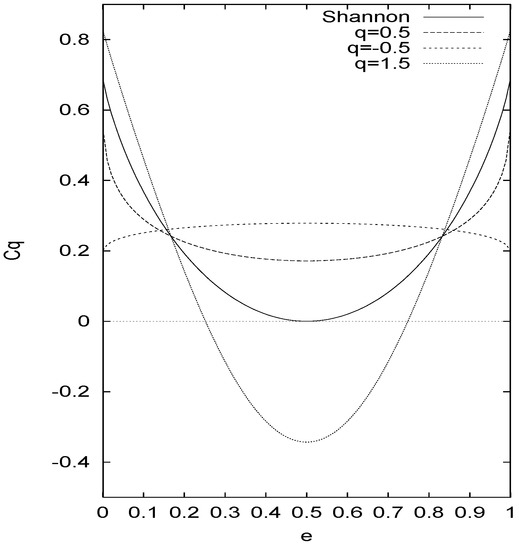

The Shannon entropy measures the expected information value of messages. The concept of information entropy was introduced by Claude Shannon in his 1948 paper "A Mathematical Theory of Communication", and is also referred to as Shannon entropy. The introduction of that paper opens: "The recent development of various methods of modulation such as PCM and PPM which exchange bandwidth for signal-to-noise ratio has intensified the interest in a general theory of communication."

When there is only one type in the dataset, Shannon entropy is exactly zero, because there is no uncertainty in predicting the type of the next randomly chosen entity. The Rényi entropy is a generalization of the Shannon entropy to values of q other than unity. In machine learning, the reduction in Shannon entropy produced by a split is called information gain.

As with thermodynamic entropy, the Shannon entropy is only defined within a system that identifies at the outset the collections of possible messages, analogous to microstates, that will be considered indistinguishable macrostates. This fundamental insight is applied here for the first time to amino acid alphabets, which group the twenty common amino acids into families based on chemical and physical similarities. To evaluate these schemas objectively, a novel quantitative method is introduced based on the inherent redundancy in the canonical genetic code. Each alphabet is taken as a separate system that partitions the 64 possible RNA codons, the microstates, into families, the macrostates. By calculating the normalized mutual information, which measures the reduction in Shannon entropy conveyed by single-nucleotide messages, groupings that best leverage this aspect of fault tolerance in the code are identified.

I hope this post helps you see the tools and methodologies one can use to find unusual activity based strictly on DNS traffic.
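The Shannon and Rényi entropy statements above can be checked numerically. The following is a minimal sketch, not code from any of the quoted sources: the function names `shannon_entropy` and `renyi_entropy` are hypothetical, and the inputs are assumed to be plain lists of counts per type.

```python
import math


def shannon_entropy(counts):
    """Shannon entropy, in bits, of a list of per-type counts."""
    total = sum(counts)
    # p * log2(1 / p) is the contribution of a type with probability p.
    return sum((c / total) * math.log2(total / c) for c in counts if c > 0)


def renyi_entropy(counts, q):
    """Renyi entropy of order q, in bits; q == 1 falls back to Shannon."""
    if q == 1:
        return shannon_entropy(counts)
    total = sum(counts)
    power_sum = sum((c / total) ** q for c in counts if c > 0)
    return math.log2(power_sum) / (1 - q)


if __name__ == "__main__":
    print(shannon_entropy([12]))          # a single type: no uncertainty
    print(shannon_entropy([3, 3, 3, 3]))  # four equally likely types
    print(renyi_entropy([1, 3], 1.0001))  # close to the Shannon value
    print(shannon_entropy([1, 3]))
```

With a single type the sum has one term with p = 1, so the entropy is zero, and as q approaches 1 the Rényi value approaches the Shannon value.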
Shannon wrote the paper "A Mathematical Theory of Communication", which I strongly encourage you to read for its clarity and its wealth of information. In it he introduced a measure now known as the Shannon entropy, which is useful for discovering the statistical structure of a word or message.

Consider a word as a discrete source that draws from a finite set of character types: each possible character has its own probability of occurrence. Each character contributes its own entropy term, and the entropy of the word is defined as the average of these terms, weighted by the probability of occurrence of each character.

The previous paragraph can easily be translated into Python code (taken from the excellent URL Toolbox on Splunkbase), whose core line is: entropy -= p * math.log(p, 2)  # Log base 2

This can be run directly on any word you have in Splunk. On the example data the score is pretty high, which makes sense since the characters are spread over many different values. If we click on the ut_shannon field to sort in reverse order, we can see that words with little character variety get a low score.

Catching DNS tunnels from subdomains in URLs

If we run the following query, interesting results are shown:

    sourcetype="isc:bind:query" | eval list="mozilla" | `ut_parse(query, list)` | `ut_shannon(ut_subdomain)` | table ut_shannon, query | sort ut_shannon desc

In the results, the high scores come from tunnels made to the domain, as well as from something unknown that could also be a tunnel.

Instead of focusing on the entropy of a probability measure on a finite set, it can help to focus on the "information loss", or change in entropy, associated with a measure-preserving function.

Image credit: Jacobs, Konrad, CC BY-SA 2.0 de.
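The per-character entropy calculation described in this post can be written out as a complete function. This is a minimal sketch built around the single line quoted from the URL Toolbox, not the toolbox's actual source: the function name `word_entropy` and the use of `collections.Counter` are assumptions made for illustration.

```python
import math
from collections import Counter


def word_entropy(word):
    """Shannon entropy, in bits per character, of the characters in a word."""
    if not word:
        return 0.0  # assumption: an empty string carries no information
    length = len(word)
    entropy = 0.0
    for count in Counter(word).values():
        p = count / length  # probability of occurrence of this character
        entropy -= p * math.log(p, 2)  # Log base 2
    return entropy


if __name__ == "__main__":
    # Hypothetical sample strings: a dictionary-like word and a random-looking label.
    for candidate in ("mozilla", "x7f3kq9zt2"):
        print(candidate, round(word_entropy(candidate), 3))
```

A random-looking subdomain label, as often produced by DNS tunnels, spreads its characters more evenly than an ordinary word and therefore scores higher, which is the property the Splunk search sorts on.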
